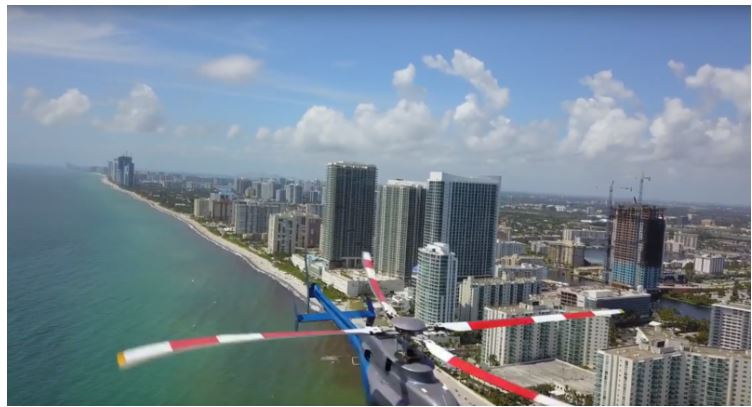

A Deep Insider’s Look at a Rugged Terrain Mission to Investigate a Helicopter Crash with Drones

nce that may not have been seen, for various reasons, during a site walk-through.

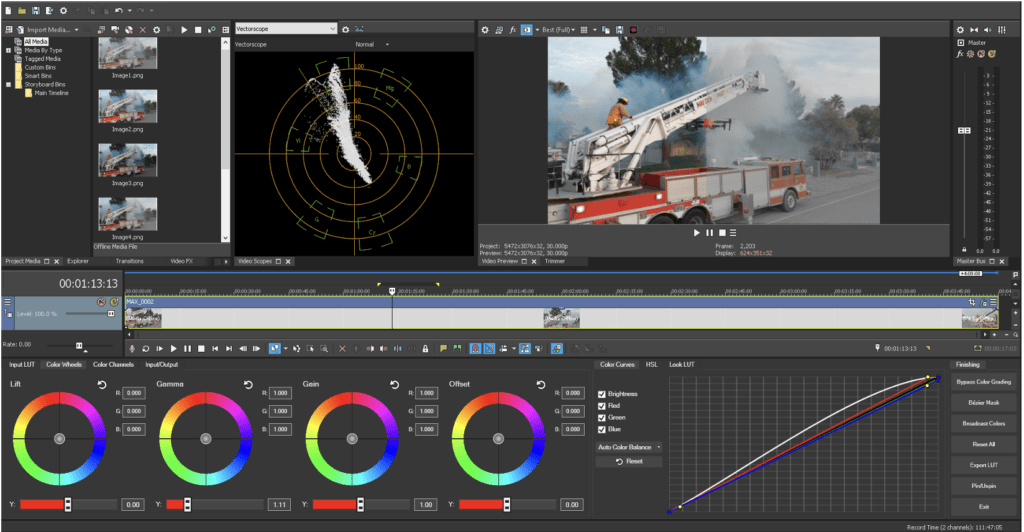

LUTS, Log, 10Bit: Geeking Out on Camera Formats for Drones

It takes more than just a Part 107 to be a good drone service provider: customers require expertise in production, too. If you’re confused about camera formats for drones and some of the newest options on the market, we’ve got you covered with this deep […]

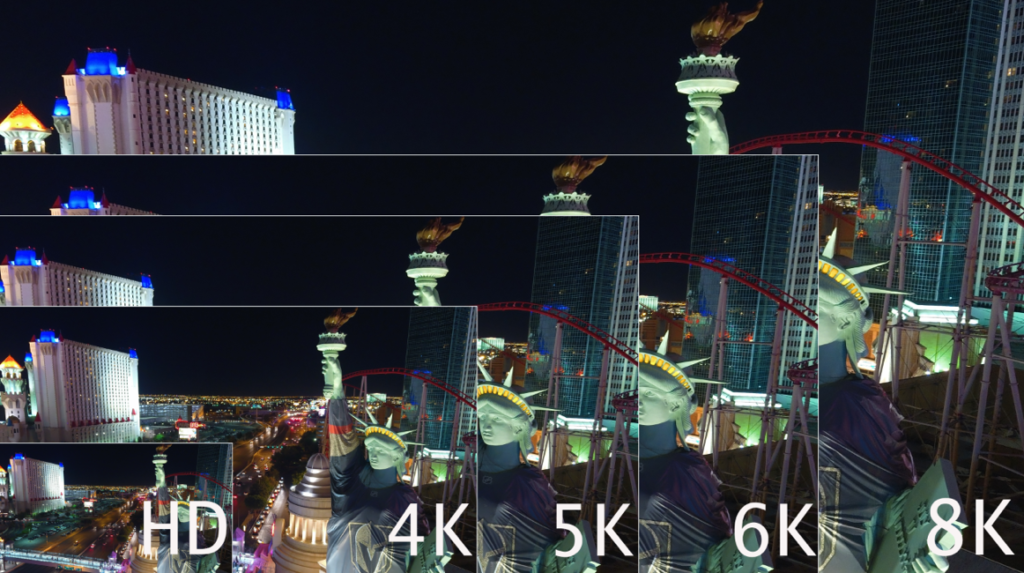

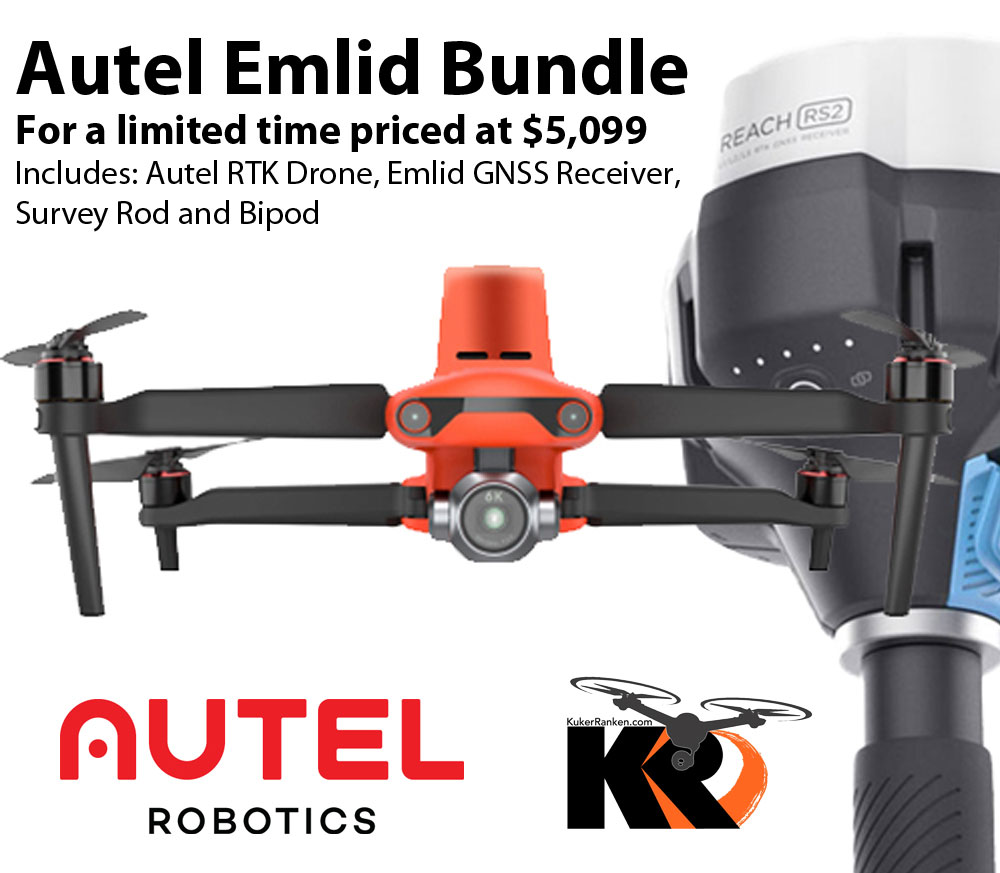

Does the Drone Industry Really Need 8K?

We have to roughly quadruple the megapixel count to double the resolution, so the jump from SD to HD makes sense, while the jump from HD to UHD/4K makes even more sense. Following that theme, jumping to 6K makes sense, while jumping to 8K is perfect in theory and nears the maximum of the human eye’s ability to resolve information.
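To make the pixel arithmetic concrete, here is a minimal sketch that compares total pixel count against vertical resolution for each jump. The frame sizes are assumptions: SD is taken as a 720×480 NTSC frame and 6K as a 6144×3456 16:9 frame, and real cameras vary. Vertical lines are used as the "linear resolution" measure because SD pixels are not square, which makes width comparisons misleading.

```python
# Frame sizes (width, height). The SD and 6K dimensions are illustrative
# assumptions; actual values depend on the broadcast standard or camera.
RESOLUTIONS = {
    "SD": (720, 480),      # assumed NTSC frame
    "HD": (1920, 1080),
    "UHD/4K": (3840, 2160),
    "6K": (6144, 3456),    # assumed 16:9 6K frame
    "8K": (7680, 4320),
}


def megapixels(width: int, height: int) -> float:
    """Total pixel count in millions."""
    return width * height / 1_000_000


def compare(a: str, b: str) -> tuple[float, float]:
    """Return (pixel-count ratio, vertical-resolution ratio) going from a to b."""
    wa, ha = RESOLUTIONS[a]
    wb, hb = RESOLUTIONS[b]
    return (wb * hb) / (wa * ha), hb / ha


for a, b in [("SD", "HD"), ("HD", "UHD/4K"), ("UHD/4K", "6K"), ("UHD/4K", "8K")]:
    px, lines = compare(a, b)
    print(f"{a} -> {b}: {px:.2f}x pixels, {lines:.2f}x vertical resolution")
```

Under these assumptions, HD to UHD/4K and UHD/4K to 8K each quadruple the pixel count while doubling vertical resolution, whereas UHD/4K to 6K raises the pixel count about 2.56× for a 1.6× gain in vertical lines.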

Part 91, 101, 103, 105, 107, 137: WHAT’S THE DIFFERENCE?

All these FARs: what’s a drone pilot to do to understand them? Do they matter? YES! In virtually every aviation pursuit except for sUAS, an understanding of regulations is requisite and part of most testing mechanisms. As a result, many sUAS pilots holding a Remote Pilot Certificate under Part 107 are woefully uninformed, […]

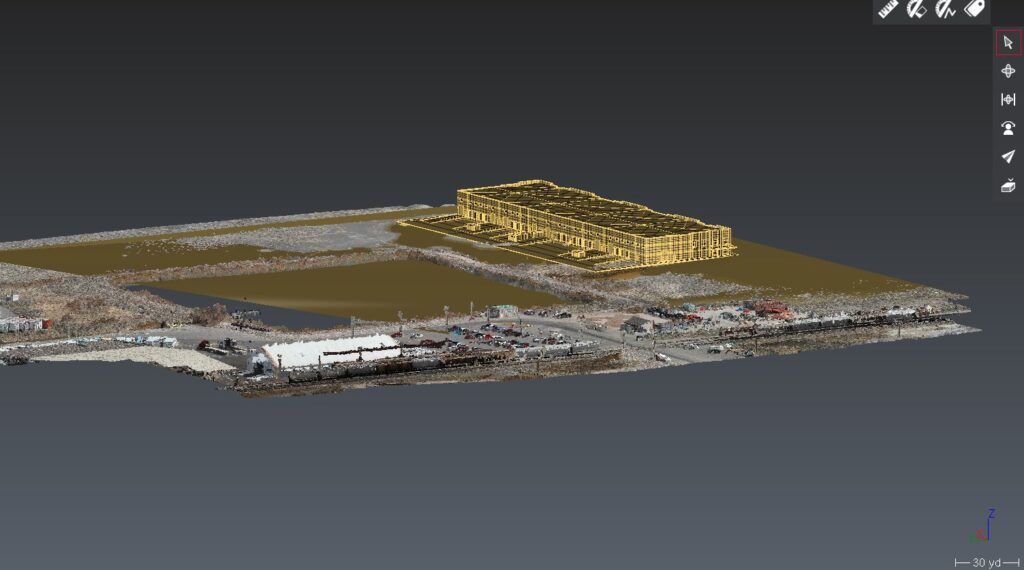

Six ways drones have proven themselves as a tool for the AEC, Surveying, and mapping industries.

Drones and unmanned aircraft are becoming much more common in AEC scanning and construction, and on today’s project sites. Many companies in […]

[More Information](https://www.eventbrite.com/cc/kukerranken-roadshow-2022-815729)
